- Airflow Installation On Mac

- Airflow Install Providers

- Airflow Installation Windows

- See Full List On Airflow.apache.org

The Airflow scheduler executes tasks on an array of workers while following the specified dependencies, and Airflow ships with command-line utilities for working with it. Luigi, Azkaban, and Oozie are built on similar ideas; Luigi is simpler in scope than Apache Airflow. The steps here also apply when installing Apache Airflow on Ubuntu or CentOS running on a cloud server.

If you download a release or provider package from Apache, you can verify its signature first with `gpg --verify apache-airflow-providers-airbyte-1.0.0-source.tar.gz.asc apache-airflow-providers-airbyte-1.0.0-source.tar.gz`; gpg then reports when the signature was made.

An Airflow installation needs a home directory. To set one, export the AIRFLOW_HOME variable with `export AIRFLOW_HOME=~/airflow`. This step is optional unless you want AIRFLOW_HOME somewhere other than ~/airflow, but we will set it anyway for the sake of example.

To install Airflow without pip, download the Airflow zip from the Git repository, unzip it, and follow the instructions in the INSTALL file. Those steps create a virtualenv and then start the installation, beginning with `python -m venv myenv` and `source myenv/bin/activate`.


Airflow is a tool commonly used in Data Engineering, and it's great for orchestrating workflows. Version 2 of Airflow only supports Python 3, so we need to make sure that we install it with Python 3. This guide uses a Raspberry Pi, but we could probably install Airflow the same way on another Linux distribution, too.

This is the first post of a series, where we'll build an entire Data Engineering pipeline. To follow this series, just subscribe to the newsletter.

Install dependencies

Let's make sure our OS is up-to-date.
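On Raspberry Pi OS, or any other Debian-based system that uses apt (an assumption here), the update typically looks like this:

```shell
# Refresh the package index, then upgrade the installed packages.
# Assumes a Debian-based OS with apt and a user with sudo rights.
sudo apt update
sudo apt full-upgrade -y
```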

Now, we'll install Python 3.x and Pip on the Raspberry Pi.
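A minimal sketch, again assuming apt and the standard Debian package names `python3` and `python3-pip`:

```shell
# Install the Python 3 interpreter and pip from the distribution packages.
sudo apt install -y python3 python3-pip

# Confirm which versions were installed.
python3 --version
pip3 --version
```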


Airflow relies on numpy, which has its own dependencies. We'll address that by installing the necessary dependencies:
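Which packages are needed depends on the OS release, so treat this as a sketch rather than a definitive list. On Raspberry Pi OS, numpy commonly needs the ATLAS linear algebra libraries, and the Python headers help when pip has to build a wheel locally. Both package names below are assumptions for your system:

```shell
# libatlas-base-dev: BLAS/LAPACK libraries that numpy often needs on the Pi.
# python3-dev: headers used when pip compiles packages from source.
# Package names are assumptions and may differ on newer OS releases.
sudo apt install -y libatlas-base-dev python3-dev
```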

We also need to ensure Airflow installs using Python3 and Pip3, so we'll set an alias for both. To do this, edit the ~/.bashrc by adding:
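The two aliases would look like this (a minimal sketch; the alias names simply shadow `python` and `pip`):

```shell
# Lines to append to ~/.bashrc so that python and pip point at Python 3.
alias python=python3
alias pip=pip3
```

Run `source ~/.bashrc` or open a new terminal for the aliases to take effect. Aliases only apply to interactive shells, so scripts should still call `python3` and `pip3` explicitly.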

Alternatively, you can install using pip3 directly. For this tutorial, we'll assume aliases are in use.

Install Airflow

Create folders

We need a folder to hold the Airflow installation.
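A minimal sketch, assuming we keep everything under `~/airflow` (which resolves to /home/pi/airflow for the default `pi` user):

```shell
# Tell Airflow where its home folder is, then create it.
# ~/airflow is also Airflow's default when AIRFLOW_HOME is not set.
export AIRFLOW_HOME="$HOME/airflow"
mkdir -p "$AIRFLOW_HOME"
cd "$AIRFLOW_HOME"
```

The `export` only lasts for the current shell session; add it to ~/.bashrc if you want it to persist.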

Install Airflow package

Finally, we can install Airflow safely. We start by defining the Airflow and Python versions so that we can build the correct constraint URL. The constraint URL pins Airflow's dependencies to versions that are known to work with that Airflow version on that Python version.
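Here is a sketch of how the constraint URL can be built. The Airflow version below is only a placeholder (an assumption, not necessarily the version you want), so check that a constraints file actually exists for your Airflow and Python combination:

```shell
# Placeholder version: replace with the Airflow 2.x release you want.
AIRFLOW_VERSION=2.0.1

# Extract major.minor from "Python 3.x.y", e.g. 3.7.
PYTHON_VERSION="$(python3 --version | cut -d ' ' -f 2 | cut -d '.' -f 1-2)"

# Constraint files live in version-specific branches of the Airflow repo.
CONSTRAINT_URL="https://raw.githubusercontent.com/apache/airflow/constraints-${AIRFLOW_VERSION}/constraints-${PYTHON_VERSION}.txt"

echo "Constraint URL: ${CONSTRAINT_URL}"
```

With the URL in hand, the install itself is a single command: `pip install "apache-airflow==${AIRFLOW_VERSION}" --constraint "${CONSTRAINT_URL}"`.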

Initialize database

Before running Airflow, we need to initialize the database. There are two options for this setup: 1) running Airflow against a separate database server or 2) running a simple SQLite database. This tutorial uses SQLite, so there's not much to do other than initializing the database.

So let's initialize it:
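On Airflow 2.x this is a single CLI call. Newer 2.x releases deprecate it in favour of `airflow db migrate`, so which command applies depends on the version you installed:

```shell
# Creates the metadata database (SQLite by default) under $AIRFLOW_HOME.
airflow db init
```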

Run Airflow

It's now possible to run both the webserver and the scheduler:
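Both commands run in the foreground, so this sketch assumes two separate terminals (or SSH sessions):

```shell
# Terminal 1: start the web UI on port 8080.
airflow webserver --port 8080

# Terminal 2: start the scheduler.
airflow scheduler
```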

Now open http://localhost:8080 in a browser. If you're asked to log in, you'll need to create a user first. Here's an example:
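The username, name, and email below are placeholders, so replace them with your own. When no password is given on the command line, the CLI prompts for one:

```shell
# Create an admin account for the web UI (all values are placeholders).
airflow users create \
    --username admin \
    --firstname Jane \
    --lastname Doe \
    --role Admin \
    --email admin@example.com
```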

Once authenticated, it's now possible to see the main screen:

And that's it - you've now installed Airflow! Optionally, you can take extra steps.

Optional

Start Airflow automatically

In order to start both the webserver and the scheduler automatically on system boot, we'll need three files: airflow-webserver.service, airflow-scheduler.service, and an environment file. Let's break this into parts:

- Go to Airflow's GitHub repo and download the `airflow-webserver.service` and `airflow-scheduler.service` files.

- Paste them into the `/etc/systemd/system` folder.

- Edit both files. First, `airflow-webserver.service` should look like:
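The following is a sketch of such a unit file, not a copy of the upstream one. It assumes the `pi` user, an environment file at /home/pi/airflow/env, and that pip placed the `airflow` executable in /home/pi/.local/bin; check the real location with `which airflow`.

```ini
[Unit]
Description=Airflow webserver daemon
After=network.target

[Service]
# Assumed path of the environment file used by both services.
EnvironmentFile=/home/pi/airflow/env
User=pi
Group=pi
Type=simple
# Assumed install location of the airflow executable.
ExecStart=/home/pi/.local/bin/airflow webserver
Restart=on-failure
RestartSec=5s
PrivateTmp=true

[Install]
WantedBy=multi-user.target
```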


Next, edit the airflow-scheduler.service file, which should look like:
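Again a sketch under the same assumptions (the `pi` user, /home/pi/airflow/env, and an `airflow` executable in /home/pi/.local/bin); only the description and the command differ from the webserver unit:

```ini
[Unit]
Description=Airflow scheduler daemon
After=network.target

[Service]
# Assumed path of the environment file used by both services.
EnvironmentFile=/home/pi/airflow/env
User=pi
Group=pi
Type=simple
# Assumed install location of the airflow executable.
ExecStart=/home/pi/.local/bin/airflow scheduler
Restart=always
RestartSec=5s

[Install]
WantedBy=multi-user.target
```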

Notice that the User and Group have changed, as well as the ExecStart. You'll also notice that both files point to an EnvironmentFile that hasn't been created yet. That's what we'll do now.

- Create an environment file. You can give it any name. I chose to call it `env` and placed it in the `/home/pi/airflow` folder. In other words:
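A minimal sketch that falls back to `~/airflow` when AIRFLOW_HOME is not set; for the `pi` user that is the /home/pi/airflow folder:

```shell
# Create an empty env file inside the Airflow home folder.
AIRFLOW_DIR="${AIRFLOW_HOME:-$HOME/airflow}"
mkdir -p "$AIRFLOW_DIR"
touch "$AIRFLOW_DIR/env"
```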

Edit the env file and add the following contents:
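What goes in this file depends on your setup. As a sketch, the two variables below tell the services where Airflow lives; the paths assume the /home/pi/airflow folder used above and the default airflow.cfg location inside it:

```ini
# Environment for the Airflow systemd services (paths are assumptions).
AIRFLOW_HOME=/home/pi/airflow
AIRFLOW_CONFIG=/home/pi/airflow/airflow.cfg
```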

- Lastly, let's reload the system daemons:
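Reloading makes systemd pick up the new unit files. The `enable --now` line is an optional extra that this sketch assumes you want: it starts both services immediately and on every boot.

```shell
# Make systemd re-read the unit files in /etc/systemd/system.
sudo systemctl daemon-reload

# Optional: start both services now and enable them at boot.
sudo systemctl enable --now airflow-webserver.service airflow-scheduler.service
```

`systemctl status airflow-webserver.service` then shows whether the service came up.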

That's it! What's next?


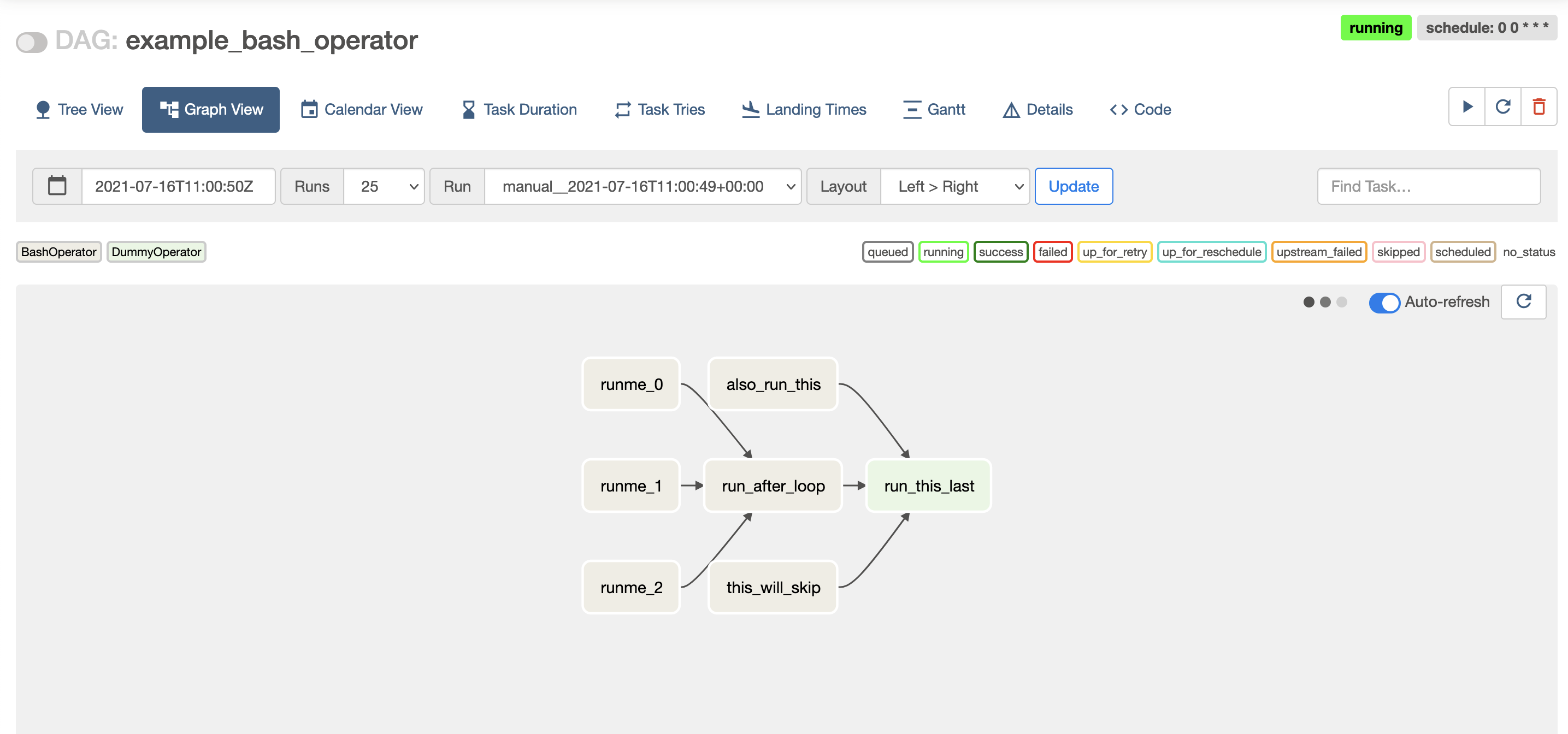

In the next blog post of this Data Engineering series, we'll create our first Directed Acyclic Graph (DAG) using Airflow. Subscribe to the newsletter, and don't miss out!